What is Omitted Variable Bias?

Omitted variable bias (OVB) occurs when a regression model excludes a relevant variable. The absence of such a variable can skew the estimated relationships between the variables that remain in the model, potentially leading to erroneous interpretations. This bias can exaggerate, mask, or entirely flip the direction of the estimated relationship between an independent and dependent variable.

Omitted variable bias is insidious! In the regression context, variables that aren’t in the model can bias the results for those in it! How does that happen?

For an omitted variable to bias the results, it must correlate with the dependent variable and at least one independent variable, making it a confounding variable. When this correlation structure exists, it forces the statistical procedure to attribute the effects of the omitted variable to variables in the model, distorting the genuine relationship.

The diagram below illustrates the conditions that cause omitted variable bias. There must be non-zero correlations (r) on all three sides of the triangle. X1 is an independent variable in the model, while X2 is omitted. Y is the dependent variable. Learn more about Correlations.

The degree of bias hinges on the collective strength of these correlations. Stronger correlations produce more bias. With weaker relationships, the bias might not be severe. The omitted variable won’t bias the results if any correlations are zero.
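For readers who like to see the algebra, here is the standard textbook result for the simplest case, with one included variable and one omitted variable. The symbols (the betas, the error term, and the short-model slope) are notation introduced here for illustration and do not come from the diagram. Assume the true relationship is

$$
Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \varepsilon
$$

with an error term that is uncorrelated with both X1 and X2. If you regress Y on X1 alone, the estimated slope for X1 settles, in large samples, on

$$
\hat{b}_1 \;\to\; \beta_1 + \beta_2 \,\frac{\operatorname{Cov}(X_1, X_2)}{\operatorname{Var}(X_1)}
$$

The second term is the bias. It vanishes when the omitted variable has no effect on Y (β2 = 0) or when X1 and X2 are uncorrelated, and it grows as either relationship strengthens. Its sign is the product of the two signs, which is how the bias can exaggerate an effect, cancel it out, or reverse it.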

Understanding and addressing omitted variable bias is crucial for researchers and analysts to draw trustworthy conclusions from their regression models.

In this post, you’ll learn what omitted variable bias is, see an example, and find out how it occurs and how to detect and avoid it.

Related post: Identifying Independent and Dependent Variables

Omitted Variable Bias Example

In a biomechanics lab, we studied the impact of physical activity on bone density, measuring variables like activity levels, weights, and bone densities. Theory suggests a positive link between activity and bone density. Using regression analysis, I found no relationship between these variables, contradicting established theories.

This unexpected result was due to omitted variable bias. Initially, I only included activity level in the model, overlooking a crucial variable: the subject’s weight. When I added weight to the analysis, both activity and weight showed significant, positive relationships with bone density.

The diagram shows that the correlation structure can produce omitted variable bias because all three sides have significant associations. Let’s see how leaving weight out of the model hid the positive relationship between activity and bone density.

The correlation pattern creates two opposite effects of activity on bone density. Higher activity increases density directly. However, active subjects tend to weigh less, and lower weight is associated with lower bone density.

When I fit the regression model with only activity, the model had to assign both opposing effects to activity alone. Hence, there was no apparent relationship initially. However, the model with both activity and weight could correctly allocate both effects.
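The masking effect is easy to reproduce with simulated data. The sketch below is not the lab's data set: the sample size, the effect sizes (1.0 for activity, 0.5 for weight), and the strength of the negative activity-weight link are all made-up values, chosen so that the two opposing effects cancel. The helper `fit_ols` is a hypothetical name for a thin wrapper around NumPy's least-squares solver.

```python
import numpy as np

def fit_ols(y, *columns):
    """Return OLS coefficients [intercept, slope_1, ...] via least squares."""
    X = np.column_stack([np.ones(len(y)), *columns])
    coefs, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coefs

rng = np.random.default_rng(0)
n = 5000

# Hypothetical data-generating process (illustrative values only).
activity = rng.normal(size=n)
weight = -2.0 * activity + rng.normal(size=n)                  # active subjects weigh less
density = 1.0 * activity + 0.5 * weight + rng.normal(size=n)   # both effects are positive

short = fit_ols(density, activity)           # weight omitted
full = fit_ols(density, activity, weight)    # weight included

print("activity coefficient, weight omitted :", round(short[1], 3))
print("activity coefficient, weight included:", round(full[1], 3))
print("weight coefficient                   :", round(full[2], 3))
```

With these assumed values, the activity-only model should report an activity coefficient near zero, because the direct effect (+1.0) and the indirect path through weight (0.5 × −2.0 = −1.0) offset each other. Once weight enters the model, the activity coefficient should land near its true value of 1.0.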

Next, I’ll go into a more statistical explanation for omitted variable bias from an assumption violation perspective.

Related post: Specifying the Correct Regression Model

Violating an OLS Residuals Assumption

When you satisfy the ordinary least squares (OLS) assumptions, the Gauss-Markov theorem states that your estimates will be unbiased and have the minimum variance among all linear unbiased estimators.

However, omitted variable bias occurs because the residuals violate one of the assumptions. You need to follow a chain of events to see how this works.

Suppose you have a regression model with two significant independent variables, X1 and X2. These independent variables correlate with each other and the dependent variable—which are the requirements for omitted variable bias.

Now, imagine that we take variable X2 out of the model. It is the confounding variable. Here’s what happens to cause omitted variable bias:

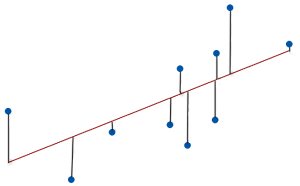

- The model fits the data less well because we’ve removed a significant explanatory variable. Consequently, the gap between the observed values and the fitted values increases. These gaps are the residuals.

- The part of the dependent variable that X2 used to explain now lands in the error term, so the degree to which each residual increases depends on the relationship between X2 and the dependent variable. Consequently, the residuals correlate with X2.

- X1 correlates with X2, and the effect of X2 now sits in the error term. Ergo, variable X1 correlates with the error term.

- Hence, this condition violates the ordinary least squares assumption that independent variables in the model do not correlate with the error term, which the residuals estimate. Violations of this assumption produce biased estimates.
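The chain of events above can be checked numerically. The following is a minimal sketch with simulated data. The coefficients (1.0 and 2.0) and the 0.7 link between X1 and X2 are arbitrary assumptions. It separates two things that are easy to blur: the unobserved error term of the reduced model, which absorbs X2, and the fitted residuals, which OLS always makes uncorrelated with the variables that stay in the model.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 5000

# Hypothetical true model: y depends on both x1 and x2, and x1 correlates with x2.
x1 = rng.normal(size=n)
x2 = 0.7 * x1 + rng.normal(size=n)
eps = rng.normal(size=n)
y = 1.0 * x1 + 2.0 * x2 + eps

# Dropping x2 pushes its contribution into the error term of the reduced model.
error_without_x2 = 2.0 * x2 + eps
corr_x1_error = np.corrcoef(x1, error_without_x2)[0, 1]

# Fit the reduced model (intercept + x1) and inspect its residuals.
X_short = np.column_stack([np.ones(n), x1])
b_short, *_ = np.linalg.lstsq(X_short, y, rcond=None)
resid = y - X_short @ b_short

corr_resid_x2 = np.corrcoef(resid, x2)[0, 1]   # residuals track the omitted variable
corr_resid_x1 = np.corrcoef(resid, x1)[0, 1]   # zero by construction in OLS

print("corr(x1, error term)  :", round(corr_x1_error, 3))
print("corr(residuals, x2)   :", round(corr_resid_x2, 3))
print("corr(residuals, x1)   :", round(corr_resid_x1, 3))
print("x1 slope without x2   :", round(b_short[1], 3), "(true value is 1.0)")
```

In this setup the error term correlates clearly with x1, which is the assumption violation, and the residuals correlate with the omitted x2. The residuals show no linear correlation with x1 because the fitting procedure removes it, pushing the distortion into the x1 coefficient instead.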

This explanation serves a purpose in the next section!

The important takeaways are that omitted variables reduce the goodness-of-fit (larger residuals) and can bias the coefficient estimates.

Related posts: 7 Classical OLS Assumptions and Check Your Residual Plots

How to Detect OVB

You saw one method for detecting omitted variable bias in this post. If you include different combinations of independent variables in the model and see the coefficients changing, you’re watching omitted variable bias in action!

In this post, I started with a regression model that had activity as the lone independent variable and bone density as the dependent variable. After adding weight to the model, the coefficient for activity changed from essentially zero to positive.
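One way to make this check systematic is to refit the model with every combination of candidate control variables and watch how the coefficient of interest moves. The function below is a rough sketch of that idea, not a standard library routine. The name `coefficient_across_specs`, the simulated variables, and their effect sizes are all assumptions made for illustration.

```python
from itertools import combinations
import numpy as np

def coefficient_across_specs(y, focal, controls):
    """Fit y on the focal variable plus every subset of the controls.

    Returns a dict mapping each tuple of control names to the focal coefficient.
    """
    results = {}
    names = list(controls)
    for k in range(len(names) + 1):
        for subset in combinations(names, k):
            cols = [np.ones(len(y)), focal] + [controls[name] for name in subset]
            X = np.column_stack(cols)
            coefs, *_ = np.linalg.lstsq(X, y, rcond=None)
            results[subset] = coefs[1]   # index 1 is the focal variable
    return results

# Simulated stand-in data (illustrative values only).
rng = np.random.default_rng(2)
n = 5000
activity = rng.normal(size=n)
weight = -2.0 * activity + rng.normal(size=n)
unrelated = rng.normal(size=n)   # a variable with no role in the true model
density = 1.0 * activity + 0.5 * weight + rng.normal(size=n)

results = coefficient_across_specs(
    density, activity, {"weight": weight, "unrelated": unrelated}
)
for spec, coef in results.items():
    print(spec or ("no controls",), round(coef, 3))
```

A coefficient that barely moves when a control is added, as with `unrelated` here, gives no sign of trouble from that variable. A coefficient that jumps when a control enters, as with `weight`, indicates the simpler model was biased by its omission.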

However, if we don’t have the data, it can be harder to detect omitted variable bias. If my study hadn’t collected the weight data, the answer would not be as clear.

I presented a clue in the previous section. For omitted variable bias to exist, a confounding variable must correlate with the residuals. Consequently, we can plot the residuals by the variables in our model. If we see a relationship in the plot rather than a random scatter, it tells us there is a problem and points us toward the solution. Because OLS forces the residuals to have zero linear correlation with the variables in the model, look for curvature or other non-random patterns rather than a straight-line trend. A pattern suggests which independent variable correlates with the confounding variable.

Another step is to consider theory and other studies carefully. Ask yourself several questions:

- Do the coefficient estimates match the theoretical signs and magnitudes? If not, you need to investigate. That was my first tip-off!

- Can you think of confounding variables that likely correlate with the dependent variable and at least one independent variable? Reviewing the literature, consulting experts, and brainstorming sessions can illuminate this possibility.

How to Avoid Omitted Variable Bias

Before you begin your study, arm yourself with all the possible background information you can gather. Research the study area, review the literature, and consult with experts. This process enables you to identify and measure potential confounding variables that you should include in your model. Sometimes, you can set control variables in an experiment to combat omitted variable bias. These approaches help you avoid the problem in the first place.

Imagine collecting all your data and then realizing that you didn’t measure a critical variable. That’s an expensive mistake!

If you absolutely cannot include a confounding variable and it causes OVB, consider using a proxy variable. Typically, proxy variables are easy to measure, and analysts use them instead of variables that are either impossible or difficult to measure. The proxy variable can be a characteristic that is not important itself but has a good correlation with the confounding variable.

These variables allow you to include some of the information in your model that would otherwise be impossible, thereby reducing omitted variable bias. For example, if it is crucial to incorporate historical climate data in your model, but those data do not exist, you might include tree ring widths instead.
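To see why a proxy reduces the bias without necessarily removing it, consider the simulation below. It is only a sketch under made-up assumptions. The proxy is modeled as the true confounder plus independent measurement noise, and the coefficients and noise levels are arbitrary. Real proxies, such as tree ring widths, may relate to the underlying variable in more complicated ways.

```python
import numpy as np

def slope_on_first(y, *columns):
    """Fit OLS with an intercept and return the coefficient of the first column."""
    X = np.column_stack([np.ones(len(y)), *columns])
    coefs, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coefs[1]

rng = np.random.default_rng(3)
n = 5000

# Hypothetical setup: x2 is the confounder we cannot measure directly.
x1 = rng.normal(size=n)
x2 = 0.7 * x1 + rng.normal(size=n)
y = 1.0 * x1 + 2.0 * x2 + rng.normal(size=n)

# Assumed proxy: the confounder observed with independent noise.
proxy = x2 + rng.normal(scale=0.5, size=n)

b_short = slope_on_first(y, x1)          # confounder omitted
b_proxy = slope_on_first(y, x1, proxy)   # proxy stands in for the confounder
b_full = slope_on_first(y, x1, x2)       # ideal case: confounder measured

print("x1 coefficient, x2 omitted :", round(b_short, 3))
print("x1 coefficient, with proxy :", round(b_proxy, 3))
print("x1 coefficient, with x2    :", round(b_full, 3))
```

With these assumed values the true x1 coefficient is 1.0. The estimate with the proxy should fall between the badly biased estimate that omits x2 and the estimate that includes x2 itself. The noisier the proxy, the more of the original bias remains.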

Learn more about Proxy Variables.

If you aren’t careful, the hidden hazards of omitted variable bias can completely flip the results of your regression analysis!


Hi Jim,

I just found your page while reading an article. I read through many of your posts, and the way you explain things helps me understand and make sense of all this.

Before I came across your website, I was pretty overwhelmed and frustrated by the equations all over the place.

I think they should learn from you. Even if those crazy equations are required for university, they should first include an intuitive explanation like you did.

Now I have one question:

I am a data science learner in progress. My question to you is:

I am considering buying your books. On one hand, I like your explanations; on the other hand, I am worried about whether intuitive explanations with fewer formulas will help me work as a data scientist.

FYI, my only objective is to make predictions in commercial project settings, nothing university-related.

Can you give me some reassuring words, or caveats if any? Do I really need to make sense of those crazy formulas that we see all over statistics to be successful as a data scientist?